Rape is probably the most under-reported crime there is, for all sorts of understandable reasons. Quite apart from the horror of the experience itself, re-living it all in telling it to a police officer is bad enough. Add the possibility that you may have to retell it all again in court, in the presence of lawyers, the public – and the man who did it – while being cross-examined by a lawyer who is trying to prove that you are lying about it all… This under-reporting has all sorts of effects. Quite apart from what rape does to the victims, under-reporting turns rape statistics into very curious things indeed.

For example, if the number of reported rapes in a year goes up, is that a good thing or a bad thing? The obvious answer is: bad. It means there have been more rapes.

The only slightly less obvious answer is: good. It means that more of the rapes that happened were reported to the police. There have been many campaigns over the past few years to persuade victims to report what has happened to them; if those campaigns are succeeding, the number of reported rapes a year will, obviously, go up. And this can happen even if the number of actual rapes stays the same or goes down.
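The arithmetic behind that last point can be sketched in a few lines of Python. The figures below are invented purely for illustration, and the function name `reported_rapes` is hypothetical; the only assumption is that reported offences equal actual offences multiplied by the proportion that get reported.

```python
def reported_rapes(actual: int, reporting_rate: float) -> int:
    """Offences that reach police records: actual offences times the share reported."""
    return round(actual * reporting_rate)


# Hypothetical figures, chosen only to illustrate the arithmetic.
year_1 = reported_rapes(actual=1000, reporting_rate=0.10)  # fewer victims come forward
year_2 = reported_rapes(actual=900, reporting_rate=0.15)   # fewer offences, more reporting

print(year_1, year_2)
```

In this made-up case the number of actual offences falls by a tenth, yet the reported figure rises, because the reporting rate has risen faster than the offences have fallen.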

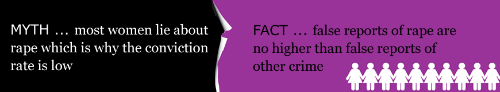

Image: Rape Crisis England & Wales – http://www.rapecrisis.org.uk/reportingrape2.php

So how can you tell from rape statistics which of these two is happening, whether there are more rapes or fewer? The short answer is that, without more information, you cannot, because rape statistics are not rape statistics. They are statistics of reported rapes, which are only a small proportion of actual rapes. Does that mean that collecting statistics on rape is a complete waste of time and effort? No – for reasons that will emerge.

A new and appalling twist on rape statistics emerged this week. The Independent Police Complaints Commission reported on the practices of Southwark’s Sapphire Unit between July 2008 and September 2009; the Sapphire Unit is the department of the Metropolitan Police that deals with rape. The report was damning. Here are some extracts:

‘This investigation found that during this period the Southwark Sapphire unit was under-performing and over-stretched, and officers of all ranks, often unfamiliar with sexual offence work, felt under pressure to improve performance and meet targets…

The unit adopted its own standard operating procedure designed to encourage officers to take retraction statements from victims in cases where it was thought they might later withdraw or not reach the standard for prosecution.’

Why did the officers do this? The report tells us exactly why: ‘By increasing the number of incidents that were then classified as “no crime”, sanction-detection rates improved and the performance statistics for the unit benefited.’
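To see why reclassifying reports as "no crime" flatters a detection rate, here is a minimal sketch. It assumes the rate is calculated as detections divided by recorded crimes, with "no-crimed" reports dropped from the denominator; the function name and all the numbers are hypothetical and are not taken from the IPCC report.

```python
def detection_rate(detections: int, reports: int, no_crimed: int) -> float:
    """Detections as a share of recorded crimes, after 'no crime' reports are removed."""
    recorded = reports - no_crimed
    return detections / recorded


# Hypothetical figures: the same 10 detections out of the same 100 reports.
before = detection_rate(detections=10, reports=100, no_crimed=0)
after = detection_rate(detections=10, reports=100, no_crimed=60)

print(f"before: {before:.0%}, after: {after:.0%}")
```

Not one additional offender has been caught in this example. The rate improves only because the denominator has shrunk.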

In several cases this had truly dreadful consequences. Those consequences included the murder of two young children by their father, who had previously been accused of rape; Sapphire Unit officers pressured his accuser into retracting her accusation, so no action was taken against the future murderer.

As the IPCC Deputy Chair Deborah Glass put it, “The approach of failing to believe victims in the first instance was wholly inappropriate. The pressure to meet targets as a measure of success, rather than focussing on the outcome for the victim, resulted in the police losing sight of what policing is about – protecting the public and deterring and detecting crime.”

As she could also have put it, two children died because police officers charged with investigating rape reports wanted their unit’s statistics to look good.

Their statistics did indeed look good. In fact they looked far too good. As Channel 4 Home Affairs Correspondent Simon Israel points out, Southwark went rapidly from one of the worst-performing Sapphire areas to one of the best. It tripled its detection rate from 10 to 30 per cent. And, as he also points out, no one in the Met queried this very obvious, very sudden, and very unexpected leap.

Bizarrely, the officers were doing this to falsify their statistics; yet the falsified statistics themselves should have revealed, on even a fairly casual inspection, the wrong that was being done. Only nobody gave the statistics even that casual look.

It is actually very hard to falsify statistics convincingly. All kinds of mathematical techniques can ring alarm bells and show where investigations should be made. A few of them are listed here. But a sudden tripling of apparent detection rates is a pretty big clue.
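Even the crudest of checks would have caught a jump of that size. The sketch below is not one of the formal techniques alluded to above, just a simple illustration: it flags any period in which a rate is more than double the previous one. The function name `flag_jumps`, the threshold and the series are all hypothetical; only the 10 and the 30 echo the figures quoted for Southwark.

```python
def flag_jumps(rates: list[float], max_ratio: float = 2.0) -> list[int]:
    """Return the positions where a rate exceeds max_ratio times the previous rate."""
    flagged = []
    for i in range(1, len(rates)):
        previous, current = rates[i - 1], rates[i]
        # Skip a zero baseline to avoid dividing by zero.
        if previous > 0 and current / previous > max_ratio:
            flagged.append(i)
    return flagged


# Hypothetical detection rates in per cent, one per reporting period.
rates = [10, 11, 10, 30, 29]
suspicious = flag_jumps(rates)

print(suspicious)
```

A flag like this proves nothing on its own; it simply says where someone ought to go and look.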

This failure to look at statistics, or to listen to statistical alarm-bells, has happened before in other contexts. Only a few weeks ago we reported here and here how no-one bothered to look at the death statistics from Stafford Hospital – which were very clearly showing that something was horribly wrong there. Because no-one looked at the statistics, things stayed horribly wrong for years – and again, people died who should have gone on living.

All of these – health statistics, crime statistics and the rest – come under the general heading of Official Statistics – which you could roughly define as statistics that are collected by civil servants. Official Statistics sounds a dry, dull, tedious subject. It was once suggested that we devote a special issue of Significance magazine to them; I declined on the grounds that I didn’t think anyone would read it. I could well have been wrong. What Southwark, and before that Stafford, are showing is that official statistics matter. They are not just numbers in a file. They are about real people. They are a matter of life and death.